A Theoretical Framework for Life: Computation as Origin, Symbiogenesis as Escalation

Key Takeaways from Blaise Agüera y Arcas (CTO of Technology & Society, Google) at Stanford

Abstract

This paper advances the thesis that life is an emergent phase of matter defined by embodied computation, and that its open-ended complexification is driven not primarily by random mutation and selection but by symbiogenesis, the fusion of previously independent computational entities. We argue that computation is the natural science of causality, establishing the arrow of time necessary for life in a universe governed by time-reversible physical laws. Through the principle of evolutionary cybernetics, we show how agential, environment-shaping purpose emerges spontaneously from computational systems under selection pressure. Building on John von Neumann’s logic of self-replication, we posit that any system capable of reproduction must, at its core, be computational. We provide empirical support for this framework with an artificial life simulation, BFF, which exhibits a dramatic phase transition from a non-computational “Turing Gas” to a computational phase of “life.” This transition is shown to be a gelation event driven by a cascade of mergers between cooperating sub-replicators. By formalizing this dynamic, we propose that symbiogenesis is the primary engine of complexification. This framework offers a unified, physically grounded perspective that contributes to theoretical biology, artificial intelligence, and complexity science.

1.0 Introduction: Beyond Materialism to a Functionalist Definition of Life

For centuries, the nature of life has been a central question of philosophy and science. Pre-Enlightenment thought often invoked a “vital spirit” or supernatural force to explain the distinction between animate and inanimate objects. The scientific revolution replaced this with a materialist perspective, asserting that life is merely a complex arrangement of matter governed by the same physical laws as everything else. While this view has been extraordinarily productive, it leaves a profound mystery unsolved: if both living and non-living matter obey the same fundamental laws of physics, what functional property distinguishes them? What, precisely, do we mean by “alive”?

The answer, we propose, lies in functionalism. A thing’s identity and significance are not solely defined by its material composition but by its function and its ecological relationship to a larger system. Consider an artificial kidney brought from the future. It might be made of tungsten filaments or carbon nanotubes, yet its identity as a “kidney” is defined by its function: filtering urea and maintaining homeostasis within a body. This functional definition is real, life-saving, and yet non-physical and platform-independent; it transcends the specific atoms from which the object is made.

This platform-independent functionalism is synonymous with the concept of computation, as first formalized by Alan Turing.

A computation is defined by what it does—its logical operations—not by the physical substrate that implements it. This paper will argue that computation is the fundamental property that enables the emergence of life from inert matter. Furthermore, we will argue that symbiogenesis—the creation of new life forms through the merging of previously independent entities—is the primary engine driving life’s open-ended increase in complexity. To build this case, we must first understand the essential role computation plays in creating causality in the physical world.

2.0 The Computational Basis of Causality and the Arrow of Time

To understand life as computation, we must first reconceptualize computation itself. It is not merely an engineering discipline that began in the 20th century but a natural science—the science of causality in physical systems. This perspective is critical because it resolves a deep paradox at the heart of modern physics.

The fundamental laws we use to describe the universe—from Newton and Maxwell to Einstein and Schrödinger—are time-reversible. If you negate the time variable in these equations, they remain valid (up to CPT symmetry). They describe a “block universe” where the past and future are equally determined and where statements of correlation hold, but they contain no intrinsic arrow of time. Yet, the biological and computational worlds are defined by irreversible processes. A causes B; an egg becomes a chicken, but a chicken does not become an egg. This unidirectional flow is the essence of causality, a concept that is meaningless in a block universe.
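The reversibility claim can be checked numerically on a toy system. The sketch below integrates Newton's equations for a frictionless unit-mass spring (the system, spring constant, and step size are assumptions chosen for illustration) with the velocity Verlet scheme, which is itself time-reversible: run forward, negate the velocity, run forward again, and the initial state returns up to floating-point rounding. Nothing in the dynamics marks which direction counts as "forward."

```python
def leapfrog(x: float, v: float, dt: float, steps: int, k: float = 1.0) -> tuple[float, float]:
    """Velocity Verlet integration of a unit-mass spring with force -k*x (toy system)."""
    for _ in range(steps):
        v_half = v - 0.5 * dt * k * x   # half kick
        x = x + dt * v_half             # drift
        v = v_half - 0.5 * dt * k * x   # half kick
    return x, v


x0, v0 = 1.0, 0.0
x1, v1 = leapfrog(x0, v0, dt=0.01, steps=1000)   # evolve "forward" to t = 10
x2, v2 = leapfrog(x1, -v1, dt=0.01, steps=1000)  # negate velocity and evolve again
print(x2, -v2)  # approximately the initial state (1.0, 0.0), up to rounding
```

The same equations run equally well in either direction; the asymmetry the text goes on to discuss has to come from somewhere other than these laws.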

Computation resolves this paradox. A physical system is said to compute when there is a parsimonious mapping from its physical state to a logical system where irreversible operations can occur. Critically, while the underlying physics of the system remains time-reversible, the computation itself—the meaningful, functional process being mapped—is not. A minimal computation can be seen as a causal loop that both measures a property of the world and acts to change it. The classic thermostat is the prime example: it measures temperature and, based on an “if-then” statement, acts to turn a heater on or off, thereby changing the temperature it measures. This cycle of measurement and action establishes a causal, time-directed process.
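A minimal sketch of the thermostat loop, with assumed numbers that do not come from the source: a 20 °C setpoint, a 5 °C exterior, a fixed heating increment, and a simple linear heat-loss model. The `heater_on` step is the "if-then" measurement; the update line is the action that changes what is measured next. The measurement maps a continuum of temperatures onto one bit, so it is many-to-one and therefore logically irreversible.

```python
def heater_on(temperature: float, setpoint: float = 20.0) -> bool:
    """Measure: a one-bit decision (the 'if-then' statement)."""
    return temperature < setpoint


def simulate(temperature: float, steps: int, outside: float = 5.0) -> list[float]:
    """Close the loop: measure, act, and let the action change what is measured."""
    history = []
    for _ in range(steps):
        heating = 3.0 if heater_on(temperature) else 0.0  # act (assumed heater output)
        leak = 0.1 * (temperature - outside)              # heat lost to the outside
        temperature += heating - leak
        history.append(temperature)
    return history


temps = simulate(temperature=5.0, steps=50)
# Many-to-one: 3.0 and 19.9 degrees yield the same decision, so the decision
# alone cannot tell us which temperature produced it.
print(heater_on(3.0) == heater_on(19.9))  # True
```

After a short warm-up the room temperature cycles in a narrow band around the setpoint.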

This process has direct thermodynamic implications. The irreversibility of computation, specifically the act of erasing information to make way for new calculations, carries an unavoidable cost. By Landauer's principle, erasing one bit dissipates at least k_B T ln 2 of heat, where k_B is Boltzmann's constant and T is the temperature of the surroundings. The computing system's memory becomes more ordered (its entropy falls) only because the system consumes free energy and exports a larger amount of entropy to its environment. This is the physical cost of imposing a causal, logical order onto the world. Therefore, concepts like causality, purpose, and agency, the hallmarks of living systems, can only be properly understood through the lens of computation, which provides the necessary framework for an arrow of time to emerge from time-symmetric physics.
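Landauer's bound can be evaluated directly: erasing one bit at temperature T dissipates at least k_B T ln 2 of heat. The sketch below computes this floor; the 300 K room temperature and the gigabyte example are illustrative choices, not figures from the source.

```python
import math

BOLTZMANN = 1.380649e-23  # J/K (exact SI value)


def landauer_limit(temperature_k: float, bits: float = 1.0) -> float:
    """Minimum heat in joules dissipated when erasing `bits` bits at temperature T."""
    return bits * BOLTZMANN * temperature_k * math.log(2)


per_bit = landauer_limit(300.0)              # roughly 2.87e-21 J at room temperature
per_gigabyte = landauer_limit(300.0, 8e9)    # erasing 8e9 bits
print(f"{per_bit:.3e} J per bit, {per_gigabyte:.3e} J per gigabyte")
```

The bound is tiny per bit, but it is a floor that no physical implementation of irreversible computation can go below.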

3.0 The Emergence of Agency Through Evolutionary Cybernetics

While computation provides the basis for causality, it does not automatically confer agency or purpose. A boulder rolling down a hill is a causal process—it will crush a car at the bottom—but we do not attribute intention to the boulder. Agency requires something more: an emergent purposiveness that arises from computational processes under evolutionary pressure.

James Lovelock’s Daisy World model provides a powerful fable for this emergence. The model posits a planet inhabited by only two species: black daisies, which absorb heat and warm their surroundings, and white daisies, which reflect light and cool their surroundings. Both species share an identical optimal temperature for reproduction. As the luminosity of the planet’s star changes over a wide range, the planet’s temperature remains remarkably stable, hovering in the sweet spot for daisy reproduction. This homeostasis is not the result of a coordinated, intelligent effort. Instead, it is an inevitable evolutionary outcome: when the planet is too cold, the black daisies that warm their local environment reproduce more successfully, increasing their population and warming the planet. When it is too hot, the white daisies gain the advantage, cooling the planet.
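A compact Daisyworld sketch follows the published model's structure: daisy cover sets the planet's albedo, albedo sets temperature, and temperature sets daisy growth. The parameter values are those commonly quoted for Watson and Lovelock's 1983 model. The discretization is an assumption of this sketch: Euler steps, a 1% seed floor so daisies can always recolonize, and luminosity swept upward with populations carried over. Comparing the temperature spread with and without daisies over the same luminosity range shows the regulation described above.

```python
# Commonly quoted Daisyworld parameters; treat them as assumptions of this sketch.
SOLAR_FLUX = 917.0       # W/m^2
SIGMA = 5.67e-8          # Stefan-Boltzmann constant, W/m^2/K^4
Q = 2.06e9               # local heat-transfer coefficient, K^4
ALBEDO = {"ground": 0.5, "white": 0.75, "black": 0.25}
DEATH_RATE = 0.3
SEED = 0.01              # minimum cover, so daisies can always recolonize


def growth(temp_k: float) -> float:
    """Parabolic growth rate: peaks at 22.5 C, zero outside roughly 5-40 C."""
    return max(0.0, 1.0 - 0.003265 * (295.65 - temp_k) ** 2)


def temperatures(lum: float, white: float, black: float) -> tuple[float, float, float]:
    """Planetary, white-patch, and black-patch temperatures in kelvin."""
    bare = 1.0 - white - black
    albedo = bare * ALBEDO["ground"] + white * ALBEDO["white"] + black * ALBEDO["black"]
    te4 = SOLAR_FLUX * lum * (1.0 - albedo) / SIGMA
    t_white = (Q * (albedo - ALBEDO["white"]) + te4) ** 0.25
    t_black = (Q * (albedo - ALBEDO["black"]) + te4) ** 0.25
    return te4 ** 0.25, t_white, t_black


def equilibrate(lum: float, white: float, black: float,
                dt: float = 0.05, steps: int = 4000) -> tuple[float, float]:
    """Euler-integrate daisy cover until it (approximately) settles."""
    for _ in range(steps):
        _, t_w, t_b = temperatures(lum, white, black)
        bare = max(0.0, 1.0 - white - black)
        white += dt * white * (bare * growth(t_w) - DEATH_RATE)
        black += dt * black * (bare * growth(t_b) - DEATH_RATE)
        white, black = max(white, SEED), max(black, SEED)
    return white, black


def sweep(luminosities: list[float], with_daisies: bool = True) -> list[float]:
    """Planetary temperature (C) at each luminosity, carrying populations forward."""
    white = black = SEED
    result = []
    for lum in luminosities:
        if with_daisies:
            white, black = equilibrate(lum, white, black)
            t, _, _ = temperatures(lum, white, black)
        else:
            t, _, _ = temperatures(lum, 0.0, 0.0)
        result.append(t - 273.15)
    return result


lums = [0.8 + 0.05 * i for i in range(9)]  # 0.80 to 1.20
with_life = sweep(lums)
bare_rock = sweep(lums, with_daisies=False)
spread_with = max(with_life) - min(with_life)
spread_bare = max(bare_rock) - min(bare_rock)
print(f"temperature spread with daisies: {spread_with:.1f} C, without: {spread_bare:.1f} C")
```

No daisy "knows" the target temperature; the regulation falls out of differential growth alone.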

This dynamic reveals a general principle we can call evolutionary cybernetics: any system that can modify its environment and has specific conditions required for its own persistence will, through selection, inevitably favor configurations that regulate the environment to maintain those conditions. The principle is almost tautological: “what persists exists, and what exists is what has persisted.” Life creates the conditions for life. This homeostatic, environment-shaping behavior is a form of computation, functionally identical to a thermostat. The next critical step is understanding how such computational systems can physically build copies of themselves.

4.0 Embodied Computation and the Logic of Self-Replication

Alan Turing’s original concept of computation was disembodied: a machine head reads and writes symbols on a tape, but the machine itself (its head and its rules) is not made of the same “stuff” as the symbols it manipulates. A program can copy itself, but the machine that runs it cannot. To understand biological reproduction, we must turn to John von Neumann’s concept of embodied computation. This shift is crucial because in life, the machine (the cell) and the instructions (the DNA) are made of the same fundamental materials.
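The disembodied case is easy to demonstrate with a quine, a program whose output is its own source text. In the sketch below (the helper name `run` is hypothetical), the Python interpreter plays the role of Turing's machine head: the program string is copied, but the interpreter that executes it is not.

```python
import contextlib
import io


def run(program: str) -> str:
    """Execute `program` and return everything it prints (trailing newline removed)."""
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(program, {})
    return buffer.getvalue().rstrip("\n")


# A classic two-statement Python quine: its output is its own source text.
QUINE = "s='s=%r;print(s%%s)';print(s%s)"

copy = run(QUINE)
print(copy == QUINE)  # True
```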

Von Neumann explored this idea using cellular automata, a model “universe” in which a computer can be constructed from the same cellular components that it manipulates as symbols. From this purely theoretical starting point, he asked: how is it possible for a machine to assemble a copy of itself from loose parts in its environment? His analysis revealed a set of necessary logical conditions for self-replication. A self-replicating system must contain:

1. A tape containing a description of the machine to be built.

2. A universal constructor, a machine that can read the tape and assemble parts according to its instructions.

3. A tape copier to duplicate the instruction tape for the new machine.

4. Crucially, the instructions for building both the universal constructor and the tape copier must themselves be encoded on the tape.
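The four conditions can be expressed as a toy program. In the sketch below, everything is hypothetical: "parts" are just string labels, the constructor assembles whatever the tape names, and the copier duplicates the tape. A machine whose tape describes its own constructor and copier (condition 4) produces a child identical to itself, indefinitely; a tape that omits the copier yields a child that cannot reproduce again.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Machine:
    """Toy machine: the parts it is built from, plus the tape it carries."""
    parts: frozenset[str]
    tape: tuple[str, ...]


def construct(tape: tuple[str, ...]) -> frozenset[str]:
    """Universal constructor (toy): assemble whatever parts the tape describes."""
    return frozenset(tape)


def copy_tape(tape: tuple[str, ...]) -> tuple[str, ...]:
    """Tape copier (toy): duplicate the description verbatim."""
    return tuple(tape)


def reproduce(parent: Machine) -> Machine:
    """Build a child from the parent's tape and hand it a copy of that tape."""
    if not {"constructor", "copier"} <= parent.parts:
        raise ValueError("cannot reproduce: constructor or copier missing")
    return Machine(parts=construct(parent.tape), tape=copy_tape(parent.tape))


# Condition 4 satisfied: the tape describes the constructor and the copier.
complete = Machine(frozenset({"constructor", "copier"}), ("constructor", "copier"))
child = reproduce(complete)
grandchild = reproduce(child)

# Condition 4 violated: the tape omits the copier.
incomplete = Machine(frozenset({"constructor", "copier"}), ("constructor",))
sterile_child = reproduce(incomplete)
```

The parent of `sterile_child` is fully functional; the defect only shows up one generation later, which is why the self-description must be complete.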

The prescience of this abstract model, developed in the 1940s, is staggering. Decades later, biology revealed its physical analogues: DNA is the tape, the ribosome is the universal constructor, and DNA polymerase is the tape copier. The instructions for building the ribosome and polymerase are, indeed, encoded on the DNA. Von Neumann’s insight was profound: a system capable of self-reproduction must, at its core, be a computational system, because its universal constructor is the construction counterpart of a universal Turing machine. He arrived at this architecture from pure theory, without ever setting foot in a biology lab, which makes him a remarkable theoretical biologist.

5.0 An Artificial Life Simulation: The Phase Transition to Life

To provide empirical support for these theoretical arguments, we developed an artificial life simulation that demonstrates the spontaneous emergence of computational life from non-computational matter. This experiment, called BFF, provides a concrete model for the origin of life.

The setup is minimal. The environment is a “soup” of random, fixed-length tapes, each written in BFF, a minimal Turing-complete programming language derived from Brainfuck. The only interaction rule is to repeatedly take two tapes from the soup, concatenate them, execute the resulting program, and then separate the tapes and return them to the soup. Because the program and its data occupy the same tape, execution can rewrite the code itself. Assuming mutation would be a key driver of evolution, the initial experimental design also included a parameter for a random mutation rate.
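The interaction rule can be sketched in a few dozen lines. The instruction semantics below are a simplified, assumed version of BFF rather than the experiment's reference implementation: two data heads, ten single-byte opcodes with every other byte a no-op, head positions wrapping around the tape, unmatched brackets halting execution, and a fixed step budget. The soup size, tape length, and per-interaction mutation scheme are likewise illustrative.

```python
import random
from typing import Optional

# Assumed, simplified BFF semantics; not the experiment's reference implementation.
STEP_LIMIT = 2 ** 13  # illustrative instruction budget per interaction


def matching_bracket(tape: bytearray, ip: int) -> Optional[int]:
    """Index of the bracket matching tape[ip], or None if it is unmatched."""
    same = tape[ip]
    other = ord("]") if same == ord("[") else ord("[")
    step = 1 if same == ord("[") else -1
    depth, i = 0, ip
    while 0 <= i < len(tape):
        if tape[i] == same:
            depth += 1
        elif tape[i] == other:
            depth -= 1
        if depth == 0:
            return i
        i += step
    return None


def run_bff(tape: bytearray, step_limit: int = STEP_LIMIT) -> None:
    """Execute `tape` in place; code and data share the same bytes."""
    n = len(tape)
    ip = head0 = head1 = 0
    for _ in range(step_limit):
        if not 0 <= ip < n:
            break  # ran off the end of the tape
        op = chr(tape[ip])
        if op == "<":
            head0 = (head0 - 1) % n
        elif op == ">":
            head0 = (head0 + 1) % n
        elif op == "{":
            head1 = (head1 - 1) % n
        elif op == "}":
            head1 = (head1 + 1) % n
        elif op == "-":
            tape[head0] = (tape[head0] - 1) % 256
        elif op == "+":
            tape[head0] = (tape[head0] + 1) % 256
        elif op == ".":
            tape[head1] = tape[head0]  # copy the byte under head0 to head1
        elif op == ",":
            tape[head0] = tape[head1]  # copy the byte under head1 to head0
        elif (op == "[" and tape[head0] == 0) or (op == "]" and tape[head0] != 0):
            target = matching_bracket(tape, ip)
            if target is None:
                break  # unmatched bracket halts execution
            ip = target
        ip += 1


def make_soup(num_tapes: int, tape_len: int, rng: random.Random) -> list[bytearray]:
    """A soup of uniformly random byte tapes."""
    return [bytearray(rng.randrange(256) for _ in range(tape_len)) for _ in range(num_tapes)]


def interact(soup: list[bytearray], rng: random.Random, mutation_rate: float = 0.0) -> None:
    """Pick two tapes, concatenate, execute, split, and return them to the soup."""
    i, j = rng.sample(range(len(soup)), 2)
    n = len(soup[i])
    combined = soup[i] + soup[j]
    run_bff(combined)
    soup[i], soup[j] = combined[:n], combined[n:]
    if mutation_rate > 0:  # optional background mutation (assumed scheme)
        for k in (i, j):
            for pos in range(len(soup[k])):
                if rng.random() < mutation_rate:
                    soup[k][pos] = rng.randrange(256)


rng = random.Random(0)
soup = make_soup(num_tapes=64, tape_len=64, rng=rng)
for _ in range(200):
    interact(soup, rng)
```

A run this short stays in the pre-transition regime; the transition described next needs millions of interactions and a much larger soup.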

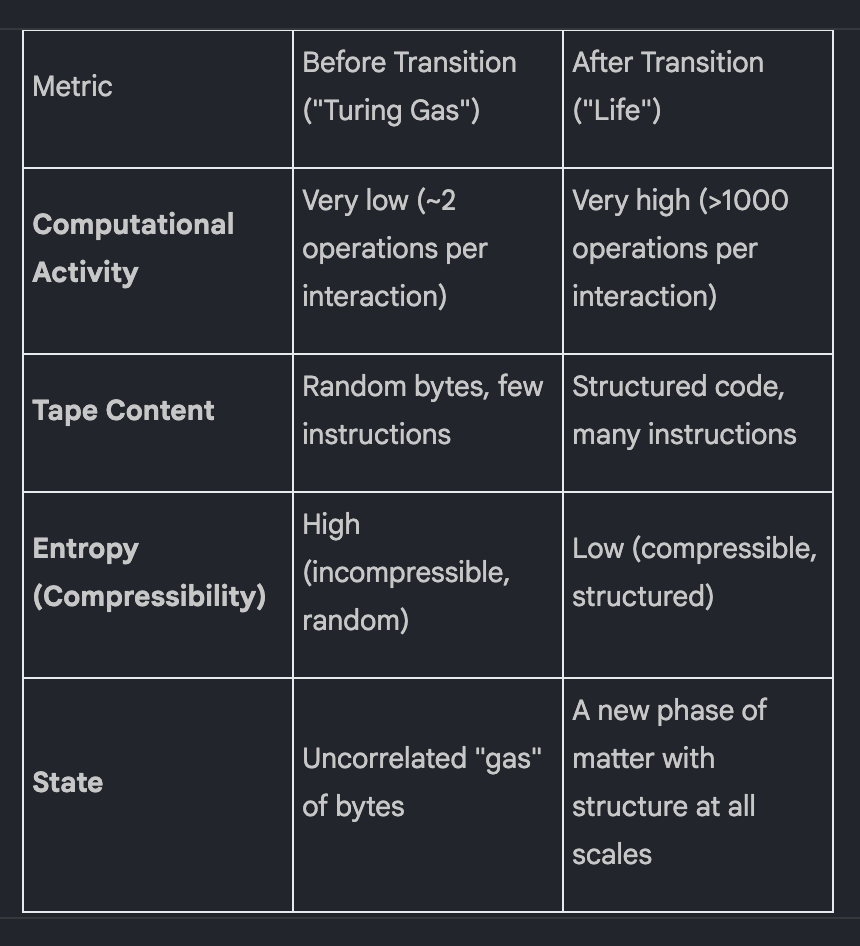

Initially, nothing much happens. The tapes are random noise, and interactions result in minimal computational activity. But after several million interactions, a dramatic phase transition occurs, and the state of the soup changes abruptly and fundamentally.

The principle driving this transition is simple: programs that can self-replicate will persist and spread, while those that cannot will eventually be overwritten. This leads to a powerful thermodynamic conclusion: given a source of free energy to power computation, life is a more stable form of matter than non-life. It is an autopoietic, entropy-reducing cycle that outcompetes random disorder.
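The persistence argument can be illustrated with a deliberately stripped-down soup. Each slot is either a replicator or not, and a replicator paired with a non-replicator overwrites it; this rule is an assumption standing in for real program execution. With no mutation, nothing in this toy ever turns a replicator back into non-replicating code, so the replicating fraction can only rise.

```python
import random


def takeover(num_slots: int, interactions: int, seed: int = 0) -> list[float]:
    """Fraction of replicator slots after each pairwise interaction (toy model)."""
    rng = random.Random(seed)
    is_replicator = [False] * num_slots
    is_replicator[0] = True  # a single replicator appears in the soup
    count = 1
    history = []
    for _ in range(interactions):
        i, j = rng.sample(range(num_slots), 2)
        if is_replicator[i] != is_replicator[j]:
            # The replicator copies itself over its partner; the reverse never happens.
            is_replicator[i] = is_replicator[j] = True
            count += 1
        history.append(count / num_slots)
    return history


fractions = takeover(num_slots=100, interactions=20_000)
print(fractions[-1])
```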

However, the simulation revealed two new mysteries that challenge conventional evolutionary theory. First, why does the complexity of the programs continue to increase after replication is achieved? Second, and more strikingly, how does this evolution occur even when the mutation rate is set to zero? The answer to both lies in symbiogenesis.

6.0 Symbiogenesis: The Engine of Open-Ended Complexification

The mysteries raised by the BFF simulation are resolved by symbiogenesis, the evolutionary theory championed by Lynn Margulis. She argued that the primary driver of evolutionary novelty is not the gradual accumulation of random mutations, but the merging of previously separate living entities to form a new, more complex whole.

The evidence for symbiogenesis in the BFF simulation is overwhelming. The phase transition is not the result of random point mutations finding a perfect replicator. Instead, it is a cascade of fusions. The process begins with the emergence of simple, single-byte “sub-replicators” that can weakly copy themselves or others. Occasionally, two of these sub-replicators combine, forming a two-byte replicator that is more effective than either of its components alone. This process repeats, building a “merger tree” of increasing complexity, ultimately resulting in a robust, full-tape replicator.

This observed phenomenon maps directly onto a formal mathematical theory: Smoluchowski’s coagulation theory, which describes processes in which monomers clump together into larger aggregates. Under certain conditions, this process leads to a gelation phase transition, in which an “infinite” cluster suddenly forms. The phase transition in BFF is a gelation event, marking the emergence of cellularity: a fully self-contained, self-replicating entity. Before this point, the system exists as a web of complex symbiotic loops, where A might help out B, which helps out C, which makes more of A. Replication is fragmented and interdependent, and replicators can be classified by how the code doing the replicating relates to the code being replicated:

• Inanimate: The code being replicated is completely disjoint from the code doing the replicating.

• Viral: There is a partial overlap; the replicated code contains some, but not all, of its replication machinery. It is not self-sufficient.

• Cellular: The replicating code is fully contained within the entity being copied, meeting von Neumann’s condition for self-reproduction.
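The three categories reduce to a set comparison. In the sketch below, each replication event is summarized by two hypothetical sets of byte positions: the code that executes the copy and the code that gets copied. How such sets would be extracted from a real BFF execution trace is left open.

```python
def classify(replicating: set[int], replicated: set[int]) -> str:
    """Classify a replication event by how the copying code overlaps the copied code."""
    if not replicating & replicated:
        return "inanimate"  # the copier is entirely outside what it copies
    if replicating <= replicated:
        return "cellular"   # all of the copying machinery is itself copied
    return "viral"          # partial overlap: some machinery is borrowed


print(classify({0, 1, 2}, {10, 11}))       # inanimate
print(classify({0, 1, 2}, {1, 2, 3}))      # viral
print(classify({1, 2}, {0, 1, 2, 3}))      # cellular
```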

The transition to cellularity is the moment of gelation. This demonstrates that the combination of computational entities, not point mutation, is the dominant force creating novel, more complex forms of life.
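Gelation itself can be reproduced in a few lines. Picking two units uniformly at random and merging their clusters joins clusters of sizes i and j with probability proportional to i·j, which is Smoluchowski coagulation with the multiplicative kernel and is equivalent to growing an Erdős-Rényi random graph. A cluster spanning a finite fraction of the system abruptly appears once the number of links passes half the number of units. The sketch illustrates this mathematics only; it is not a model of BFF, and the system size and link counts are arbitrary choices.

```python
import random


class DisjointSet:
    """Union-find that tracks cluster sizes and the largest cluster."""

    def __init__(self, n: int) -> None:
        self.parent = list(range(n))
        self.size = [1] * n
        self.largest = 1

    def find(self, x: int) -> int:
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a: int, b: int) -> None:
        ra, rb = self.find(a), self.find(b)
        if ra == rb:
            return
        if self.size[ra] < self.size[rb]:
            ra, rb = rb, ra
        self.parent[rb] = ra
        self.size[ra] += self.size[rb]
        self.largest = max(self.largest, self.size[ra])


def largest_cluster_fraction(n: int, links_per_unit: float, seed: int = 0) -> float:
    """Merge clusters of randomly chosen unit pairs; return largest cluster / n."""
    rng = random.Random(seed)
    ds = DisjointSet(n)
    for _ in range(int(links_per_unit * n)):
        ds.union(rng.randrange(n), rng.randrange(n))
    return ds.largest / n


for c in (0.3, 0.45, 0.55, 0.7, 1.0):
    print(c, round(largest_cluster_fraction(10_000, c), 3))
```

Below 0.5 links per unit the largest cluster stays a tiny fraction of the system; above it, one cluster rapidly absorbs most units.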

7.0 A Unified Dynamical Model of Evolution: Competition and Combination

The observations from the BFF simulation can be formalized into a mathematical model of evolution that accounts for both gradual change and the revolutionary leaps of symbiogenesis. We propose a dynamical system with two core components:

• The R term: This represents conventional population dynamics—replication and competition for niches. It describes continuous, closed-ended evolution, akin to Lotka-Volterra equations governing predator-prey cycles. This is “evolution.”

• The K term: This represents symbiogenesis—the discrete, contingent, and rare events where entities combine to create novel, more complex forms. It describes discontinuous, open-ended complexification. This is “revolution.”
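The R and K terms are described above only in words. One illustrative way to write such a system, assuming x_i is the abundance of entity type i, is a generalized Lotka-Volterra part (R) plus a Smoluchowski-style fusion part (K). The notation is an assumption of this sketch, not the model's published form.

$$
\frac{dx_i}{dt} \;=\; \underbrace{x_i\Big(r_i + \sum_j a_{ij}\,x_j\Big)}_{R:\ \text{replication and competition}}
\;+\; \underbrace{\frac{1}{2}\sum_{(j,k):\; j\oplus k = i} \kappa_{jk}\,x_j\,x_k \;-\; x_i \sum_j \kappa_{ij}\,x_j}_{K:\ \text{fusion (symbiogenesis)}}
$$

Here r_i is an intrinsic growth rate, a_ij encodes competition (a_ij < 0) or cooperation (a_ij > 0) between types, j ⊕ k = i means that fusing j and k yields type i (the sum runs over ordered pairs), and κ_jk is a small fusion rate. Setting every κ to zero leaves a closed system of already-existing types, the "evolution" regime; nonzero κ creates types that did not exist before, the "revolution" regime.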

The BFF simulation allows us to test this model through ablation studies. By computationally suppressing the K term—for example, by preventing the formation of deep merger trees—we can isolate the effects of each component. The result is unequivocal: without the rare, high-depth mergers that constitute the K term, the gelation phase transition never occurs. This is remarkable, as blocking these events requires blocking only about one in a thousand interactions. Yet it is these critical, contingent fusions that drive open-ended evolution.

This ablation reveals a critical causal link between symbiosis and symbiogenesis. By analyzing the R term dynamics in the ablated system, we observe that cooperative interactions between different sub-replicators become stronger and stronger over time. An intricate ecosystem of mutual helping emerges. This strengthening cooperation is what is actually driving the phase transition. Widespread symbiosis precedes symbiogenesis. The revolutionary K events are the physical fusion of entities that were already cooperating ecologically. Thus, the open-ended complexification of life is driven by the contingent K term, which is itself scaffolded by the emergence of cooperative ecosystems governed by the R term.

8.0 Discussion and Broader Implications

Extrapolating from this computation-symbiogenesis framework allows us to reinterpret major phenomena in biology, intelligence, and technology through a unified lens.

First, we can re-evaluate the history of life on Earth. The “major evolutionary transitions” identified by Maynard Smith and Szathmáry are not isolated events but merely the most visible peaks of a continuous process. Symbiogenesis is happening “all the way down.” The human genome itself is a testament to this. It is rife with the fossil evidence of past fusions: the placental barrier was formed by a repurposed virus; myelination in vertebrates likely comes from a viral endogenization event. In some species, the effect is even more dramatic: a quarter of the cow genome is a single transposon. This process provides a definitive arrow of time for complexity. The notion that bacteria are “just as evolved” as humans is incorrect; humans are fundamentally more complex because we are composed of bacteria (and viruses) that have learned to work together.

Second, symbiogenesis creates massively parallel computation, which in turn enables collective intelligence. When two computational entities merge, they form a parallel computer. This dynamic underlies the scaling laws observed across a vast range of systems:

• The scaling of intelligence with the number of cortical columns in a brain.

• The scaling of inventiveness and economic output with the size of a city.

• The scaling of capabilities in large AI models with their parameter count.
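Scaling laws of this kind are usually stated as power laws, Y ≈ c·N^β, where N is system size (cortical columns, residents, parameters) and β > 1 means output grows faster than size. The sketch below fits β by least squares on log-transformed data. The data are synthetic and the exponent is arbitrary, chosen only to show the method; neither reflects measurements of brains, cities, or models.

```python
import math


def fit_power_law(sizes: list[float], outputs: list[float]) -> tuple[float, float]:
    """Least-squares fit of Y = c * N**beta in log-log space; returns (c, beta)."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(y) for y in outputs]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    beta = sxy / sxx
    c = math.exp(y_mean - beta * x_mean)
    return c, beta


# Synthetic data (not measurements): Y = 2 * N**1.15, i.e. superlinear scaling.
sizes = [1e3, 1e4, 1e5, 1e6]
outputs = [2.0 * n ** 1.15 for n in sizes]
c, beta = fit_power_law(sizes, outputs)
print(f"c = {c:.3f}, beta = {beta:.3f}")
```

With real, noisy data the same fit applies, but the fitted exponent comes with uncertainty that should be reported alongside it.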

Third, this framework deconstructs the notion of the unified self. Evidence from split-brain patients and conjoined twins reveals that the “self” is not a monolithic entity but an emergent team of cooperating computational sub-agents. The most compelling evidence comes from the subjective reports of split-brain patients who, although the main connection between their two hemispheres has been surgically severed, insist that they do not feel any different than they did before. The powerful feeling of unity is an evolved function that ensures cohesive action, not a fundamental reality.

Finally, human technology can be understood as a continuation of this symbiogenetic trajectory. People make steam engines, but it is just as true that steam engines make people: the only reason there are eight billion people on Earth is that seven billion of them were made by steam engines. Steam engines entered a symbiotic, co-evolutionary relationship with us. Today, Large Language Models (LLMs) and other forms of AI are the latest entities to enter this co-evolution, extending our collective computational capacity. This ongoing technological symbiosis is not without risks (cooperation is not always mutually beneficial, and incomplete understanding of these evolutionary drivers poses significant challenges), but it is a continuation of the fundamental process that has driven the complexification of life from its origin.

9.0 Conclusion

This paper has presented a theoretical framework in which life is understood as an embodied, computational phase of matter. We argue that life emerges not as a statistical fluke but as a thermodynamically stable, entropy-reducing state in any universe that can support computation and possesses a source of free energy.

The primary mechanism driving its open-ended complexification is symbiogenesis. As demonstrated in simulation and formalized in a dynamical model, this process can be understood as a gelation phase transition, where cooperating computational entities fuse into more complex, hierarchically organized wholes. This process of combination, rather than random mutation, is the main engine of evolutionary innovation and the source of life’s arrow of complexity. This framework offers a unified, physically grounded perspective on the origin of life, the escalation of biological complexity, the nature of intelligence, and the deep historical trajectory of technological evolution.

10.0 References

Agüera y Arcas, B., & Norvig, P. (2023). Artificial General Intelligence Is Already Here. Noema Magazine.

Buscas, G. A. (2024). The Malthusian trap and the Industrial Revolution. Journal of Economic Growth, 29(1), 73-111.

Fontana, W., & Buss, L. W. (1994). The arrival of the fittest: Toward a theory of biological organization. Bulletin of Mathematical Biology, 56(1), 1-64. [Note: Source for “Turing Gas” concept.]

Koutsolutros, J. (2024). A Theory of Computation in Physical Systems. arXiv preprint arXiv:2401.07255.

Landauer, R. (1961). Irreversibility and heat generation in the computing process. IBM Journal of Research and Development, 5(3), 183-191.

Leniere, K. A., Petro, A. S., & Williams, L. D. (2021). The central dogma is a symbiosis. Journal of Molecular Evolution, 89(5), 263-269.

Lotka, A. J. (1925). Elements of Physical Biology. Williams & Wilkins Company.

Margulis, L. [published as Sagan, L.] (1967). On the origin of mitosing cells. Journal of Theoretical Biology, 14(3), 225-274.

Mérejkovski, C. (1910). Theorie der zwei Plasmaarten als Grundlage der Symbiogenesis, einer neuen Lehre von der Entstehung der Organismen. Biologisches Centralblatt, 30, 278-303, 321-347, 353-367.

Schrödinger, E. (1944). What is Life? The Physical Aspect of the Living Cell. Cambridge University Press.

Smoluchowski, M. (1916). Drei Vorträge über Diffusion, Brownsche Molekularbewegung und Koagulation von Kolloidteilchen. Physikalische Zeitschrift, 17, 557-571.

Szathmáry, E., & Maynard Smith, J. (1995). The major evolutionary transitions. Nature, 374(6519), 227-232.

Turing, A. M. (1937). On computable numbers, with an application to the Entscheidungsproblem. Proceedings of the London Mathematical Society, s2-42(1), 230-265.

Volterra, V. (1926). Variazioni e fluttuazioni del numero d’individui in specie animali conviventi. Memorie della Reale Accademia Nazionale dei Lincei, Classe di Scienze Fisiche, Matematiche e Naturali, 2, 31-113.

Von Neumann, J. (1966). Theory of Self-Reproducing Automata (edited and completed by A. W. Burks). University of Illinois Press.

Watson, A. J., & Lovelock, J. E. (1983). Daisyworld: a cybernetic proof of the Gaia hypothesis. Tellus B, 35(4), 284-289.

Zenil, H., Kiani, N. A., & Tegnér, J. (2018). Algorithmic information dynamics. Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences, 376(2123), 20170224.